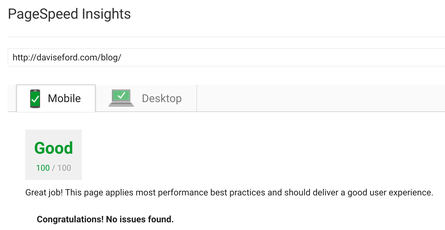

How I Achieved A Perfect 100/100 Google PageSpeed Score

07 Jun 2017

Here’s my take on how to get 100/100 on Google’s PageSpeed tool.

My dirtiest trick (how to deal with the Google Analytics code hurting your score) is near the end of this article.

Keep in mind that not all of these techniques are much fun to implement or live with.

I’m using Jekyll to generate my static site, which is hosted on an AWS EC2 t2.micro instance.

Basic Tips

- Minify your CSS/HTML/JS

- Inline your critical CSS as needed

- Compress images

- Move all JavaScript and stylesheets to your footer

- Remove as much clutter as you can from your page

CSS Tips (asynchronous Bootstrap loading)

The most important things to do: minify your CSS, and inline as much of it as you can. Google loves that.
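To make "inline as much CSS as you can" concrete, here is a minimal Node.js sketch of a post-build step that drops already-minified critical CSS into a `<style>` tag just before `</head>`. The function name `inlineCriticalCss` is hypothetical, and the sketch assumes you have already extracted the critical CSS yourself; it does not decide which rules are critical.

```javascript
// Hypothetical post-build helper: inline critical CSS into an HTML string.
// Assumes `criticalCss` is already minified and holds only above-the-fold rules.
function inlineCriticalCss(html, criticalCss) {
  // Locate the closing </head> tag (case-insensitive).
  const closeHead = html.search(/<\/head>/i);
  if (closeHead === -1) {
    throw new Error("No </head> found; cannot inline CSS");
  }
  // Insert the <style> block immediately before </head>.
  return html.slice(0, closeHead) + `<style>${criticalCss}</style>` + html.slice(closeHead);
}
```

In a Jekyll setup you would run something like this over the HTML files in _site/ after `jekyll build` (reading and writing them with Node's `fs` module), and load the rest of the stylesheet asynchronously.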

I used Bootstrap 4 for this project, mainly just to play with it. Here’s a quick hack for loading Bootstrap asynchronously so it doesn’t block rendering. Google will penalize you unless you do some variation of this.

```html
<!-- Async Bootstrap Loading - add this to your footer -->
<script>
var cb = function () {
  var l = document.createElement('link');
  l.rel = 'stylesheet';
  l.href = 'https://maxcdn.bootstrapcdn.com/bootstrap/4.0.0-alpha.6/css/bootstrap.min.css';
  // Same SRI hash as the <noscript> fallback below
  l.integrity = 'sha384-rwoIResjU2yc3z8GV/NPeZWAv56rSmLldC3R/AZzGRnGxQQKnKkoFVhFQhNUwEyJ';
  l.crossOrigin = 'anonymous';
  document.getElementsByTagName('head')[0].appendChild(l);
};
// Look the functions up on window, so a missing one is undefined instead of a ReferenceError
var raf = window.requestAnimationFrame || window.mozRequestAnimationFrame ||
    window.webkitRequestAnimationFrame || window.msRequestAnimationFrame;
if (raf) raf.call(window, cb);
else window.addEventListener('load', cb);
</script>
<noscript>
  <link rel="stylesheet"
        href="https://maxcdn.bootstrapcdn.com/bootstrap/4.0.0-alpha.6/css/bootstrap.min.css"
        integrity="sha384-rwoIResjU2yc3z8GV/NPeZWAv56rSmLldC3R/AZzGRnGxQQKnKkoFVhFQhNUwEyJ"
        crossorigin="anonymous">
</noscript>
```

This loads Bootstrap just after the first paint (or on the load event in browsers without requestAnimationFrame). Beware: it will give you the unholy FOUC (Flash Of Unstyled Content). Although Google slurps this up and rewards you for it, I really think the FOUC is ugly. Yes, there are ways around it (tweaking display settings on the container), but it’s just so ugly.
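If you want the async load without the FOUC, one option is the workaround mentioned above: keep the main container hidden until the stylesheet has actually loaded. The sketch below wraps the link injection in a Promise that settles on the link's `load` or `error` event. `loadCss` is a hypothetical helper, and the `doc` parameter exists only so it can be exercised outside a browser.

```javascript
// Hypothetical helper: inject a stylesheet and resolve once it has loaded.
// `doc` defaults to the page's document; pass a stand-in only for testing.
function loadCss(href, options = {}, doc = globalThis.document) {
  return new Promise((resolve, reject) => {
    const link = doc.createElement("link");
    link.rel = "stylesheet";
    link.href = href;
    if (options.integrity) {
      // Subresource Integrity needs a CORS request, so set crossOrigin as well.
      link.integrity = options.integrity;
      link.crossOrigin = options.crossOrigin || "anonymous";
    }
    link.onload = () => resolve(link);
    link.onerror = () => reject(new Error(`Failed to load ${href}`));
    doc.head.appendChild(link);
  });
}
```

In the page you would start the container hidden (for example, a hypothetical `<main id="content" style="visibility:hidden">`), call `loadCss` with the Bootstrap URL and integrity hash, and set the container's `visibility` back to `visible` in a `.finally()` handler, so the content still appears if the CDN fails.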

Build Script

Here’s my custom build script for creating thumbnails automatically, compressing images, building the site with Jekyll, and finally uploading to AWS S3 (the EC2 instance polls the S3 bucket for changes every few minutes).

The script assumes you keep all of your images in the ./public/img/ directory. It will create a thumbnails directory with all photos resized to be no wider than 445px.
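A note on "no wider than 445px": ImageMagick's plain `-resize 445` geometry scales every image to 445px wide, enlarging narrower ones too, while the shrink-only form `445>` leaves narrower images alone. Here is the arithmetic of the shrink-only rule as a small sketch; the function name is hypothetical, and ImageMagick's own rounding may differ by a pixel.

```javascript
// Hypothetical helper: shrink-only resize that preserves aspect ratio.
function fitToWidth(width, height, maxWidth = 445) {
  // Images already narrow enough are returned unchanged (never enlarged).
  if (width <= maxWidth) {
    return { width, height };
  }
  const scale = maxWidth / width;
  return { width: maxWidth, height: Math.max(1, Math.round(height * scale)) };
}
```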

The script then compresses all of the images in public/img/ (to Google’s liking) and runs the Jekyll build.

After the site is built, it’s uploaded with the AWS CLI to an S3 bucket. Note that we enable the --size-only flag - since Jekyll regenerates things all the time, this will stop AWS CLI from blindly updating identical files that simply have different timestamps.
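The effect of --size-only can be modelled in a few lines. This sketch follows the comparison rules the AWS CLI documents for `aws s3 sync` (upload when the object is missing, when the sizes differ, or, by default, when the local file is newer); the function and field names are hypothetical.

```javascript
// Hypothetical model of the upload decision made by `aws s3 sync`.
// local:  { size, mtime }          remote: { size, lastModified } or undefined
function needsUpload(local, remote, { sizeOnly = false } = {}) {
  if (!remote) return true;                   // not in the bucket yet
  if (local.size !== remote.size) return true; // contents clearly changed
  if (sizeOnly) return false;                  // --size-only: ignore timestamps
  // Default behaviour: also upload when the local copy is newer.
  return local.mtime > remote.lastModified;
}
```

The trade-off: with --size-only, an edit that leaves a file's byte count unchanged (a one-character typo fix, say) will not be uploaded.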

I know some people use jekyll-assets for this, but the setup and configuration turned me off.

```bash
#!/bin/bash
# Stop on the first failure so a broken build is never uploaded
set -euo pipefail

THUMBNAIL_DIR='/tmp/thumbnails/'

# Google-recommended defaults
# https://developers.google.com/speed/docs/insights/OptimizeImages
# '445>' only shrinks: images already narrower than 445px keep their size
JPG_OPTS=(-resize '445>' -sampling-factor 4:2:0 -strip)
PNG_OPTS=(-resize '445>' -strip)

# Housekeeping
rm -rf "${THUMBNAIL_DIR}"
mkdir -p "${THUMBNAIL_DIR}"

# Copy the original images to /tmp/, skipping the existing thumbnails directory
rsync -a --exclude 'thumbnails/' './public/img/' "${THUMBNAIL_DIR}"

# Resize thumbs (.jpg and .jpeg in one pass)
find "${THUMBNAIL_DIR}" -type f \( -iname '*.jpg' -o -iname '*.jpeg' \) -exec mogrify "${JPG_OPTS[@]}" {} \;
find "${THUMBNAIL_DIR}" -type f -iname '*.png' -exec mogrify "${PNG_OPTS[@]}" {} \;

# Move tmp thumbnails into the directory - only overwrite if size is different
rsync -r --size-only --delete "${THUMBNAIL_DIR}" './public/img/thumbnails/'
rm -rf "${THUMBNAIL_DIR}"
echo "Thumbnails done."

# Compress images and thumbs
find ./public/img/ -type f \( -iname '*.jpg' -o -iname '*.jpeg' \) -exec jpegoptim --strip-all --max=85 {} \;
find ./public/img/ -type f -iname '*.png' -exec optipng -o7 {} +
echo "Images compressed"

# Build latest
bundle exec jekyll build

# Upload our site to our S3 bucket
aws s3 sync --delete --size-only ./_site/ s3://my-s3-bucket/blog
echo "Done uploading"
```

This script is perhaps a bit overkill, but its main job (thumbnail creation and Google-approved image compression) lets me use this snippet, saved as _includes/thumbnail.html, for thumbnails in Jekyll:

```liquid
{% capture ImgPath %}{{ site.baseurl }}/public/img/{{ include.path }}{% endcapture %}
{% capture ThumbPath %}{{ site.baseurl }}/public/img/thumbnails/{{ include.path }}{% endcapture %}
{% if include.caption %}
<figure>
  <a href="{{ ImgPath }}" target="_blank">
    <img src="{{ ThumbPath }}" {% if include.alt %} alt="{{ include.alt }}" {% endif %} {% if include.width %} width="{{ include.width }}" {% endif %} {% if include.class %} class="{{ include.class }}" {% endif %}/>
  </a>
  <figcaption>{{ include.caption }}</figcaption>
</figure>
{% else %}
<a href="{{ ImgPath }}" target="_blank">
  <img src="{{ ThumbPath }}" {% if include.alt %} alt="{{ include.alt }}" {% endif %} {% if include.width %} width="{{ include.width }}" {% endif %} {% if include.class %} class="{{ include.class }}" {% endif %}/>
</a>
{% endif %}
```

Which you would use like this:

```liquid
{% include thumbnail.html path="le-mans/IMG_0145.jpg" class="img-thumbnail" alt="1967 Pontiac Le Mans" %}
```

This piece of code lets me easily display inline pictures without worrying about massive original image sizes.
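For readers who don't use Liquid, here is the same logic as a plain JavaScript sketch: the `<img>` loads the small copy from thumbnails/, and the link opens the full-size original. The function is hypothetical and, like the include, does no HTML escaping, so it assumes trusted input.

```javascript
// Hypothetical equivalent of the thumbnail include: thumbnail <img>, full-size link.
function thumbnailHtml({ path, alt, width, cls, caption }, baseurl = "") {
  const imgPath = `${baseurl}/public/img/${path}`;
  const thumbPath = `${baseurl}/public/img/thumbnails/${path}`;
  // Only emit the optional attributes that were provided.
  const attrs = [`src="${thumbPath}"`];
  if (alt) attrs.push(`alt="${alt}"`);
  if (width) attrs.push(`width="${width}"`);
  if (cls) attrs.push(`class="${cls}"`);
  const link = `<a href="${imgPath}" target="_blank"><img ${attrs.join(" ")}/></a>`;
  // Wrap in <figure> only when a caption is given.
  return caption ? `<figure>${link}<figcaption>${caption}</figcaption></figure>` : link;
}
```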

My httpd.conf file

Here are the settings that matter, from my httpd.conf file (located in /etc/httpd/conf/).

These settings address most of Google’s complaints about Expires headers, browser caching, and compression.
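For reference, here is the arithmetic behind mod_expires' "access plus N unit" values as a small sketch: Expires is the request time plus the offset, and Cache-Control max-age is the same offset in seconds. It handles only a single "access plus N unit" pair (mod_expires also accepts several pairs and a "modification" base), and it assumes 30-day months and 365-day years. The function name is hypothetical.

```javascript
// Seconds per unit; months and years are assumed to be 30 and 365 days.
const UNIT_SECONDS = {
  year: 365 * 86400, month: 30 * 86400, week: 7 * 86400,
  day: 86400, hour: 3600, minute: 60, second: 1,
};

// Hypothetical helper: headers for a spec like "access plus 1 year".
function expiresHeaders(spec, now = new Date()) {
  const m = /^access plus (\d+) (year|month|week|day|hour|minute|second)s?$/i.exec(spec.trim());
  if (!m) throw new Error(`Unsupported spec: ${spec}`);
  const seconds = Number(m[1]) * UNIT_SECONDS[m[2].toLowerCase()];
  return {
    "Cache-Control": `max-age=${seconds}`,
    Expires: new Date(now.getTime() + seconds * 1000).toUTCString(),
  };
}
```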

```apache
## Deal with the Vary: Accept-Encoding demand
## Updated Jan 28, 2019, thanks to reader Arno G., who helpfully pointed out that I needed to escape the period.
<IfModule mod_headers.c>
    <FilesMatch "\.(js|css|xml|gz|html)$">
        Header append Vary: Accept-Encoding
    </FilesMatch>
</IfModule>

## EXPIRES CACHING ##
<IfModule mod_expires.c>
    ExpiresActive On
    ExpiresByType image/jpg "access plus 1 year"
    ExpiresByType image/jpeg "access plus 1 year"
    ExpiresByType image/gif "access plus 1 year"
    ExpiresByType image/png "access plus 1 year"
    ExpiresByType text/css "access plus 1 month"
    ExpiresByType application/pdf "access plus 1 month"
    ExpiresByType text/x-javascript "access plus 1 month"
    ## Depending on your mime.types, .js is served as application/javascript or text/javascript
    ExpiresByType application/javascript "access plus 1 month"
    ExpiresByType text/javascript "access plus 1 month"
    ExpiresByType application/x-shockwave-flash "access plus 1 month"
    ExpiresByType image/x-icon "access plus 1 year"
    ExpiresDefault "access plus 2 days"
</IfModule>

<IfModule mod_deflate.c>
    # Force compression for mangled `Accept-Encoding` request headers
    # https://developer.yahoo.com/blogs/ydn/pushing-beyond-gzipping-25601.html
    <IfModule mod_setenvif.c>
        <IfModule mod_headers.c>
            SetEnvIfNoCase ^(Accept-EncodXng|X-cept-Encoding|X{15}|~{15}|-{15})$ ^((gzip|deflate)\s*,?\s*)+|[X~-]{4,13}$ HAVE_Accept-Encoding
            RequestHeader append Accept-Encoding "gzip,deflate" env=HAVE_Accept-Encoding
        </IfModule>
    </IfModule>

    <IfModule mod_filter.c>
        AddOutputFilterByType DEFLATE "application/atom+xml" \
                                      "application/javascript" \
                                      "application/json" \
                                      "application/ld+json" \
                                      "application/manifest+json" \
                                      "application/rdf+xml" \
                                      "application/rss+xml" \
                                      "application/schema+json" \
                                      "application/vnd.geo+json" \
                                      "application/vnd.ms-fontobject" \
                                      "application/x-font-ttf" \
                                      "application/x-javascript" \
                                      "application/x-web-app-manifest+json" \
                                      "application/xhtml+xml" \
                                      "application/xml" \
                                      "font/eot" \
                                      "font/opentype" \
                                      "image/bmp" \
                                      "image/svg+xml" \
                                      "image/vnd.microsoft.icon" \
                                      "image/x-icon" \
                                      "text/cache-manifest" \
                                      "text/css" \
                                      "text/html" \
                                      "text/javascript" \
                                      "text/plain" \
                                      "text/vcard" \
                                      "text/vnd.rim.location.xloc" \
                                      "text/vtt" \
                                      "text/x-component" \
                                      "text/x-cross-domain-policy" \
                                      "text/xml"
    </IfModule>

    <IfModule mod_mime.c>
        AddEncoding gzip svgz
    </IfModule>
</IfModule>
```

```apache
## Unset cookies for the "image" folder
## Updated Jan 28, 2019 per a note from Arno G., quoted here:
## "If you want to match based on request URL, you’ll want to use `SetEnvIf Request_URI`, not `SetEnvIf mime`
## (match if the mime-type of the requested resource is `image/jpg`, `image/png`, etc…)."
## I was using this incorrectly before, and attempting to match on a URL.
## mod_setenvif has no special "mime" attribute (it would test a request header
## named "mime"), so image files are matched by extension instead.
<IfModule mod_headers.c>
    <FilesMatch "\.(gif|ico|jpe?g|png)$">
        Header unset Set-Cookie
    </FilesMatch>
</IfModule>
```

My sneakiest trick of all - Dealing with Google Analytics

Okay, so you are probably running the Google Analytics code on your site to track visitors. The problem is that Google PageSpeed will throw a fit about redirects and leveraging browser caching when it encounters the Google Analytics script.

Some authors go as far as downloading the Google Analytics script to their server every day with a cron job, then serving that file locally.

I’d rather just get straight to the point:

```html
<!-- Google Analytics -->
<script>
// Skip loading analytics when the page is fetched by PageSpeed Insights
if (navigator.userAgent.indexOf("Speed Insights") === -1) {
  (function (i, s, o, g, r, a, m) {
    i['GoogleAnalyticsObject'] = r;
    i[r] = i[r] || function () {
      (i[r].q = i[r].q || []).push(arguments);
    }, i[r].l = 1 * new Date();
    a = s.createElement(o),
    m = s.getElementsByTagName(o)[0];
    a.async = 1;
    a.src = g;
    m.parentNode.insertBefore(a, m);
  })(window, document, 'script', 'https://www.google-analytics.com/analytics.js', 'ga');

  ga('create', 'UA-XXXXX-Y', 'auto'); // replace with your own tracking ID
  ga('send', 'pageview');
}
</script>
```

Yup, we just skip loading the script when the user agent contains "Speed Insights". Cheating? Sure, kinda. But the other fixes for it are just as hacky, and a way bigger pain in the ass.
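If you'd rather keep that check in one testable place, a small helper works. The "Speed Insights" marker comes from the snippet above; "Chrome-Lighthouse" is an assumption about how newer Lighthouse-based runs identify themselves, so check your own server logs before relying on either. The names are hypothetical.

```javascript
// Substrings assumed to identify PageSpeed / Lighthouse fetches (verify in your logs).
const PAGESPEED_UA_MARKERS = ["Speed Insights", "Chrome-Lighthouse"];

// Hypothetical helper: true when the user agent looks like a PageSpeed run.
function isPageSpeedAgent(userAgent) {
  return PAGESPEED_UA_MARKERS.some((marker) => userAgent.includes(marker));
}
```

In the analytics snippet you would then wrap the loader in `if (!isPageSpeedAgent(navigator.userAgent)) { ... }`.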

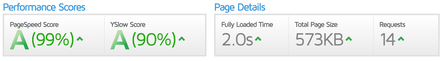

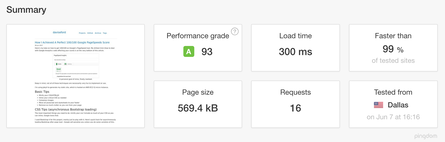

Some other useful tools for testing page speed are Pingdom, YSlow and GTmetrix. They will each ding you on different measures (GTmetrix really, really wants you to use a CDN), but they are a great way to see how your site performs.

Here are some sample results:

Those stats aren’t too shabby. Keep in mind that at the time of testing I had 12 images on the front page, which accounted for most of the page weight. I could have cheated and hidden the pictures, but these results are closer to the real world.

Thanks for reading. I hope this helps you, in some small way, reduce your page load time.
